Why Thinking Frameworks Matter More Than Ever in the Age of AI

Artificial intelligence is making information easier to generate than ever before—but harder to evaluate.

If you have used AI tools recently—ChatGPT, Copilot, Gemini, or others—you have probably had the same reaction many people do: this technology seems incredibly knowledgeable. AI can write reports, summarize research, generate code, brainstorm strategies, and explain complicated topics in seconds. Tasks that once took hours can now take minutes.

But after the initial excitement fades, a quieter question surfaces. How do we know whether the information these systems provide is actually correct? And, just as important, how do we know we can trust our own judgment of the output?

That question points to the real challenge of the AI era. The problem is no longer access to information: the Internet built the roads to it, and it is now abundant and instantly available. The real challenge is learning how to think clearly about the information we receive.

The learners and professionals who succeed in an AI-powered world will not simply be those who know how to operate AI tools. They will be the ones who know how to evaluate, question, and reason through the outputs those tools produce. In other words, success increasingly depends on judgment or taste rather than information access.

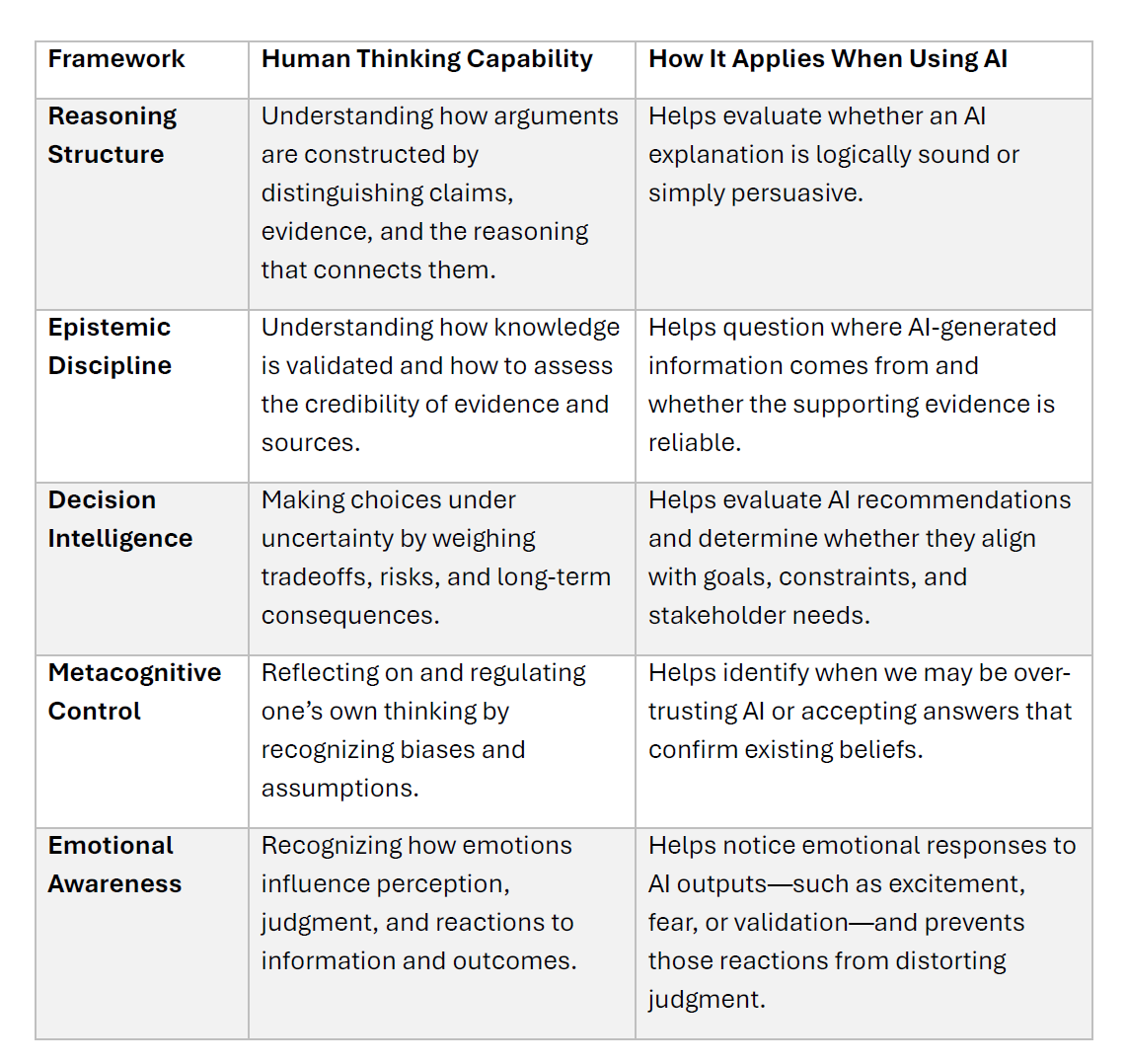

This is where foundational thinking frameworks become useful. They are not abstract academic ideas. They are practical ways of understanding how thinking works so that people can navigate complex information environments with more clarity. Five frameworks are especially helpful: reasoning structure, epistemic discipline, decision intelligence, metacognitive control, and emotional awareness.

These thinking capabilities work together when professionals interact with AI. Reasoning structure helps us examine the logic of explanations. Epistemic discipline helps us evaluate the credibility of information. Decision intelligence guides how we choose actions under uncertainty. Metacognitive control helps us recognize biases in our own thinking. Emotional awareness reminds us that our reactions—confidence, fear, excitement, or resistance—can shape how we interpret information and make decisions.

Thinking Frameworks

Understanding How Arguments Actually Work

Imagine asking an AI system why remote workers might be more productive than office workers. Within seconds it can generate a polished explanation. It might reference studies, describe patterns in workplace data, and present a convincing narrative.

But the key question is not whether the explanation sounds convincing. The real question is whether the argument is logically sound.

Most explanations—whether written by humans or AI—combine three elements: claims, evidence, and inference. A claim is what someone believes to be true. Evidence is the information supporting that claim. Inference is the reasoning that connects the two.

When these elements blur together, it becomes easy to accept explanations that sound persuasive but are not actually strong. One common example is confusing correlation with causation. If companies with remote workers report higher productivity, that pattern alone does not prove remote work caused the improvement. Other factors could be responsible.
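To make the correlation-versus-causation point concrete, here is a minimal Python sketch. Everything in it is invented for illustration: the companies, the `well_managed` flag, and the productivity scores are hypothetical, not real workplace data. The data is built so that productivity depends only on management quality, yet well-managed companies happen to adopt remote work more often.

```python
from statistics import mean

# Hypothetical rows: (well_managed, remote, productivity_score).
# By construction, the score depends ONLY on management quality;
# remote work has no effect at all in this toy data.
companies = (
    [(True, True, 80)] * 8      # well managed, remote
    + [(True, False, 80)] * 2   # well managed, office
    + [(False, True, 60)] * 2   # poorly managed, remote
    + [(False, False, 60)] * 8  # poorly managed, office
)


def avg_productivity(rows, remote, well_managed=None):
    """Average score for remote or office companies, optionally
    restricted to one management-quality group."""
    scores = [
        score
        for managed, is_remote, score in rows
        if is_remote == remote
        and (well_managed is None or managed == well_managed)
    ]
    return mean(scores)


# Naive comparison: remote companies look more productive.
naive_gap = avg_productivity(companies, True) - avg_productivity(companies, False)

# Comparing like with like: the gap disappears within each group.
gap_well_managed = (
    avg_productivity(companies, True, True)
    - avg_productivity(companies, False, True)
)
gap_poorly_managed = (
    avg_productivity(companies, True, False)
    - avg_productivity(companies, False, False)
)

print(naive_gap)           # 12
print(gap_well_managed)    # 0
print(gap_poorly_managed)  # 0
```

The 12-point gap in the naive comparison is real as a pattern, but it comes entirely from the hidden third factor. Once companies are compared within the same management group, remote work makes no difference in this toy example.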

AI systems are excellent at identifying patterns, but pattern recognition is not the same as understanding why something happens. Knowing how arguments are structured helps us avoid mistaking a well-written explanation for a well-supported one.

Learning How Knowledge Is Verified

Many professionals now turn to AI for insights about markets, industries, or business strategies. The responses often sound confident and authoritative.

Yet an important question remains: where did this information actually come from?

This question points to epistemic discipline, which simply means understanding how knowledge is validated. Not all evidence is equally reliable. Information may come from experiments, observations, surveys, expert opinions, or historical analysis. Each source has strengths and limitations.

In an AI-driven information environment, this distinction matters because AI systems present information very fluently. An explanation can sound convincing even when the underlying evidence is incomplete or uncertain.

Professionals who develop good epistemic habits naturally start asking different questions. They ask what kind of evidence supports a claim, who produced the information, and whether alternative explanations exist. These questions are not about skepticism for its own sake. They are about maintaining intellectual reliability in a world where information can be generated almost instantly.

Making Decisions When the Answers Are Uncertain

AI is increasingly used to help organizations analyze decisions. It can forecast trends, compare competitors, and simulate possible outcomes. That analysis can be extremely helpful. However, analysis alone does not determine what action should be taken.

Even when AI provides recommendations, human judgment is still required to weigh tradeoffs and consider broader consequences. Decision-makers must balance things like short-term results versus long-term risk, efficiency versus resilience, or profit versus stakeholder impact.

Strong decision-makers rarely optimize a single metric. They think about how different factors interact and how outcomes will unfold over time. They also consider how decisions will be monitored so adjustments can be made when new information appears. AI can expand the range of insights available to us. But the responsibility for choosing a direction still belongs to humans.

Recognizing How Our Own Minds Can Mislead Us

Even when we understand reasoning, evidence, and decision-making, there is another challenge: our own thinking.

Humans rely on mental shortcuts that help us process information quickly, but these shortcuts can introduce bias. Confirmation bias leads us to favor information that supports what we already believe. Anchoring causes us to rely heavily on the first number or idea we hear. Availability bias makes recent or vivid examples feel more important than they really are.

AI can unintentionally reinforce these biases. When people ask AI questions that already contain assumptions, the responses often align with those assumptions. When the output confirms what we expect, it can feel validating—even if the reasoning deserves closer examination.

Developing metacognitive awareness helps counter this tendency. Metacognition simply means thinking about how we think. It involves noticing when our assumptions may be shaping how we interpret information and being willing to update our views when credible new evidence appears. In many ways, this ability to reflect on our own thinking becomes one of the most valuable human skills in an AI-rich environment.

The Real Shift Taking Place

For much of modern history, education focused on knowledge acquisition. Learning meant gathering information and storing it for future use. Artificial intelligence changes this dynamic dramatically. Information is no longer scarce. It can be generated and accessed almost instantly. What becomes scarce instead is reliable judgment.

The professionals who stand out in the AI era will not simply be those who produce information quickly. They will be those who can evaluate arguments, assess evidence quality, make thoughtful decisions under uncertainty, and recognize when their own thinking needs adjustment.

Thinking frameworks such as reasoning structure, epistemic discipline, decision intelligence, emotional awareness, and metacognitive control help build these abilities. They act almost like a mental operating system for navigating a world where intelligent systems generate more information than any person could process alone.

A Final Reflection

Artificial intelligence is transforming how we work, learn, and solve problems. But it does not eliminate the need for human thinking. If anything, it makes thoughtful reasoning even more important.

In a world where intelligence and knowledge can be generated instantly, the real advantage belongs to those who know how to evaluate them.